A friend of mine contacted me asking my opinion on why Google isn’t loving Celebrity Cowboy. Celebrity Cowboy is a celebrity blog that should be ranking well for a variety of terms but is, for some reason, continually under-performing for its niche.

I told him that I would take a look at it, and while my speciality isn’t really search engines, I did notice a few things right off the bat.

Code

Positioning

One of the first things I noticed about the XHTML generated by the theme used at Celebrity Cowboy is that the blogroll is near the top of the page, with more than twenty items linking out to other sites. While this is only on the front page of the site now, it wasn’t always like this and could have led to a black mark for the site.

Then there is the content, and then the list of internal links to each one of the more than two dozen categories. Could Google be penalizing the site for having so many outbound links at the top of the page of code, and so many links near the bottom? Could they see this as an attempt to affect search engine rankings by stuffing links in a site?

Things like this have happened before and Google has always been harsh on such things. The flip side though is that all of these links are relevant. Google doesn’t penalize for relevant links, do they?

With Google’s war against paid links, I wouldn’t be surprised if a few sites got caught in the crossfire, and with these links being site-wide, Google may have mistaken them for paid links.

No doubt they would like sites to nofollow their blogrolls and other external links that aren’t part of the normal daily content, despite those links being relevant.
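To see how many followed external links a page really carries, a quick audit can help. The following is only a minimal sketch using Python’s standard library; the class name, the function name, the sample markup, and the `celebritycowboy.example` host are all assumptions made for illustration, not anything taken from the actual site.

```python
from html.parser import HTMLParser
from urllib.parse import urlparse


class FollowedLinkFinder(HTMLParser):
    """Collect external links that do not carry rel="nofollow"."""

    def __init__(self, own_host):
        super().__init__()
        self.own_host = own_host  # hypothetical host name of the audited site
        self.followed = []

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        attrs = dict(attrs)
        href = attrs.get("href") or ""
        host = urlparse(href).netloc
        # Relative links have no host, so they count as internal.
        if not host or host == self.own_host:
            return
        rel_tokens = (attrs.get("rel") or "").lower().split()
        if "nofollow" not in rel_tokens:
            self.followed.append(href)


def followed_external_links(html, own_host):
    finder = FollowedLinkFinder(own_host)
    finder.feed(html)
    return finder.followed


# Made-up blogroll markup, for illustration only.
sample = """
<ul class="blogroll">
  <li><a href="http://example.org/">Friend blog</a></li>
  <li><a href="http://example.net/" rel="nofollow">Another blog</a></li>
  <li><a href="/category/news/">News</a></li>
</ul>
"""
print(followed_external_links(sample, "celebritycowboy.example"))
```

Running something like this against the front page and a single post page would show whether the blogroll and sidebar links are still being passed along as followed links.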

Validation

The theme that Celebrity Cowboy is using doesn’t validate. Google has proved time and time again that if you don’t work hard on making your code valid, you can cause yourself to drop in the rankings, and even sometimes to be marked as a “bad” site.

Sometimes sites get listed on stopbadware.org just because their JavaScript doesn’t work correctly, or advertising doesn’t load properly. I have seen this happen to more than a few sites.

Fixing up as many validation issues as possible could help remove the penalty placed on the site, as Google’s indexing bots might then be able to index the content more efficiently, and without error.

One of the things I first noticed was that there is an ID used more than once, something that probably doesn’t affect the Google search bots, but something that is not correct in XHTML. Classes should be used for repeating items, not IDs.
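A validator will report repeated IDs, but a short script can list them quickly while you work on the theme. This is a rough sketch with Python’s standard library; the names and the sample markup are hypothetical.

```python
from collections import Counter
from html.parser import HTMLParser


class IdCollector(HTMLParser):
    """Count how often each id attribute value appears in a page."""

    def __init__(self):
        super().__init__()
        self.ids = Counter()

    def handle_starttag(self, tag, attrs):
        for name, value in attrs:
            if name == "id" and value:
                self.ids[value] += 1


def duplicate_ids(html):
    collector = IdCollector()
    collector.feed(html)
    # An id is only valid if it appears once per document.
    return sorted(i for i, count in collector.ids.items() if count > 1)


# Made-up markup: "post" is reused and should become a class instead.
sample = (
    '<div id="post"><p id="meta-1">First</p></div>'
    '<div id="post"><p id="meta-2">Second</p></div>'
)
print(duplicate_ids(sample))
```

Any ID the script reports is a candidate for conversion to a class in both the template and the stylesheet.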

Correcting such things should also improve how various browsers render the site, which could have the side effect of increasing traffic, page views, and even links to the blog.

Just Plain Strange

There was one more thing about the coding of the site that really got me scratching my head. It seems that the header image is displayed via CSS, and so rather than showing an image with the proper hyperlink code around it, the coder chose to use JavaScript to turn the div that displays the header into a clickable item that uses location.href to bring the visitor back to the index page.

To me this seems like a very bad way to achieve this effect, and probably not one that Google looks highly on.

Content

One issue that Google has with many sites, especially celebrity sites, is “thin content”. They constantly adjust rankings based on this issue. So many articles on Celebrity Cowboy have fewer than one hundred words, and this can make Google cranky.

An example of a post that has really thin content is the George Clooney Reacts to Nicole Kidman’s Pregnancy post. There are fewer than two dozen words here, and an image. Surely the writer could give a few more points about George Clooney, Nicole Kidman, and older actresses being pregnant.

I suggest increasing the number of articles that include over one hundred words, and reducing blockquotes from other sites, lists of external links, and other content which doesn’t increase the usefulness of Celebrity Cowboy.

There should be at least one hundred words of fresh, original content in as many articles as possible.

This is made worse when you remove information around a single post. Remove images, external links, advertisements, repeated content like the popular articles list, and about text, and you are left with very little actual content for Google to sift through, with a very high amount of code.
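One way to get a feel for this is to compare the visible words in a post with the size of the markup around them. The sketch below is only an approximation under stated assumptions: it uses Python’s standard library, the one-hundred-word threshold is the figure suggested above rather than anything Google has published, and the names and sample markup are made up.

```python
from html.parser import HTMLParser


class VisibleText(HTMLParser):
    """Collect text nodes, skipping script and style blocks."""

    SKIP = {"script", "style"}

    def __init__(self):
        super().__init__()
        self.parts = []
        self._skip_depth = 0

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIP:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIP and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth:
            self.parts.append(data)


def content_stats(html, min_words=100):
    extractor = VisibleText()
    extractor.feed(html)
    words = len(" ".join(extractor.parts).split())
    return {
        "words": words,             # visible words in the markup
        "html_chars": len(html),    # total size of the markup
        "thin": words < min_words,  # below the suggested threshold
    }


# Made-up post markup, for illustration only.
sample = (
    '<div class="post"><h2>Sample headline</h2>'
    '<p>A short post about a celebrity and nothing more.</p>'
    '<script>var tracked = true;</script></div>'
)
print(content_stats(sample))
```

Feeding it only the post body, rather than the whole page, gives the fairest picture of how much original text each article really offers.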

Another way of reducing this thin content issue, on the front page and on each subsequent page and archive area, is to increase the number of stories shown per page. While this might not be as important on the front page, it is definitely an issue on other pages and archive areas where only summaries are shown.

There is a plug-in for WordPress that allows you to change how many posts are shown depending on where the user is on the site. I would suggest enabling this plug-in, and increasing each page to between fifteen and twenty-five posts. While this will make pages longer, it will mean more content per page for Google to see, and the increase in code and loading times should be negligible.

Comments

Another suggestion on how to help your blog would be to find a way to increase user participation in the form of comments. You could highlight the person who has commented the most, or feature the best comment of the week. The cost would be an outbound link, but the reward could be more comments, which can help keep a post fresh in the eyes of search engines, and contribute to the content indexed on the page.

I have actually ranked fairly high for a keyword that I never wrote myself; it appeared in a reader’s comment, and Google picked it up.

Links

Just like the “thin content” penalty that Celebrity Cowboy may be getting, another issue might be the sheer number of links on each and every page. Also, in talking to the owner of the site, it seems that at one point they were both selling text link advertisements and promoting unrelated websites, neither of which Google looks very kindly on.

Content Scrapers

We all know what it means to deal with spam blogs, but it looks like Celebrity Cowboy has been targeted hard. A search on the popular search engines for specific titles shows some very interesting results. It seems that Google has basically given in to content scrapers in this case, and Celebrity Cowboy is nowhere to be seen.

Something that might help identify content scrapers is to use plugins for WordPress that allow you to put a copyright notice before the content in the RSS feed only. Also, Feedburner should list people using your content for bad things. Then it is just a matter of “nicely” asking them to stop, or using .htaccess to block their server’s IP address from even seeing the feed.

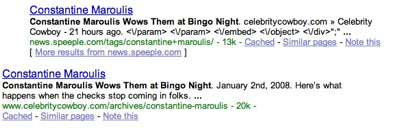

A good example of what is happening in regards to the content scrapers is when you take an article with a unique title and search for it on Google.

You will see the post that was made on Celebrity Cowboy about Constantine Maroulis showing up on a content scraper site, ahead of Celebrity Cowboy, where it was originally written. This unfortunately seems to be happening on nearly every post.

Images

One of the things I noticed on a quick view of the code being generated is that on each image and embedded item in the content, there is extra style being added to remove padding, margins and borders. This is only beefing up the amount of code that Google sees, and contributing to the “thin content” problem I mentioned earlier.

This is most likely being added thanks to the WYSIWYG editor built into WordPress.
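If the inline styles all say the same thing, one rule in the theme stylesheet can replace them, and the attributes can be stripped from the posts. The snippet below is a rough sketch in Python rather than anything WordPress-specific; the function name and sample markup are assumptions, and it only handles double-quoted `style` attributes on `img`, `embed`, and `object` tags.

```python
import re

# Matches a style="..." attribute inside an img, embed, or object tag.
INLINE_STYLE = re.compile(
    r'(<(?:img|embed|object)\b[^>]*?)\s+style="[^"]*"',
    re.IGNORECASE,
)


def strip_inline_styles(html):
    """Return the cleaned markup and the number of characters removed."""
    cleaned = INLINE_STYLE.sub(r"\1", html)
    return cleaned, len(html) - len(cleaned)


# Made-up post markup, for illustration only.
post = '<p><img src="star.jpg" style="margin:0;padding:0;border:0" alt="Star" /></p>'
cleaned, saved = strip_inline_styles(post)
print(cleaned)
print(saved, "characters removed")
```

The saving per image is small, but across every image and embed in every post it adds up to a noticeable amount of markup that carries no content.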

Advertising

Recently, there was a big issue with sites selling text link advertisements, and Google took steps to persuade people to stop selling such links, and while Celebrity Cowboy no longer has paid text links on the site, Google may not have fully restored the site in their search engine rankings.

I haven’t heard of anyone else really having such issues; in fact, most people who saw their PageRank drop due to a penalty for selling links didn’t notice any shift in their search engine results.

Context

When a site changes both in design and server, which Celebrity Cowboy has, I am sure that Google “raises an eyebrow”. Google, or at least the program that indexes our blogs, probably asks itself, “Has the site been sold, or changed so much that we should re-index it? Is it really the same site we have come to know and love, or should we put it in the sandbox for a while?”

Surely, with all the changes that Google has seen happen to the site, it isn’t going to carry on without giving it an extra dose of scrutiny. Unfortunately, if there was some extra weight behind the site in the past, it may have lost this through changing IP addresses. I haven’t heard of this happening before, but it doesn’t mean it isn’t a possibility.

Google has done some strange things in the past, and Celebrity Cowboy isn’t the only example of something like this happening. You could be doing everything right for a long period of time, yet something changes with Google, and you are pushed so far down the rankings that you almost have to start again with a new site.

Steps Already Taken

Over on Celebrity Cowboy, they have been trying very hard to take every step they can think of to rectify their search engine problem, and here are just a few that were mentioned to me:

- Changed the blogroll to only show up on the front page

- Show categories in the right column after the content

- Only show categories with at least 5 posts

- Removed all text link ads

- Removed all unrelated, self-owned cross promotion

- Cleaned up theme code to remove unnecessary tags

- Worked on building more links within the celebrity niche

It seems like many steps have been taken, but the search engines, especially Google, haven’t changed their view towards Celebrity Cowboy. Could it be a waiting game now, as the last major change was changing servers in the middle of November 2007? I doubt it. I am positive that there needs to be more work done on the site in order for the search engine penalty to be removed.

Suggestion Rundown

- Get your code to validate

- Increase content per post

- Promote people that comment

- Decrease outbound links

- Fight content scrapers

- Deal with garbage code being added to posts

- Nofollow all links that are not in your content, or part of your own site

There is no reason why Google would prefer content scrapers over the original content providers, and I hope this is just an error on their part that they will eventually fix, but the reality is that their mistakes affect bloggers and business owners, and if they don’t get better at providing helpful, valuable, and correct search results, people will eventually move somewhere that does.

For Celebrity Cowboy, and other sites that have been similarly affected, I know this can be very frustrating, but the best thing you can do is complain loudly, and hope that the Internet backs you up in your fight to be properly considered in the major search engines.

Hopefully this will change soon, and the tips I have listed will help Celebrity Cowboy at least rank higher than the scrapers.

Originally posted on January 9, 2008 @ 9:36 am